On October 16th 2017, the cancer research journal Oncogene published an editorial on the topic of research integrity:

“The importance of being earnest in post-publication review: scientific fraud and the scourges of anonymity and excuses”.

The editorial contains a list of 8 common excuses dishonest authors use to escape responsibility for manipulated data. It was authored by David Sanders, virologist and professor at the Department of Biological Sciences at Purdue University in West Lafayette, Indiana, US, and Justin Stebbing, professor of cancer medicine and oncology at Imperial College London, UK, who is also one of the two Editors-in-Chief (EiC) of the journal Oncogene.

Sanders is one of those rare brave academics who are unafraid to call out scientific misconduct while their peers hide in the bushes and even point fingers at whistleblowers like him. As the newspaper USA Today wrote earlier this year, Sanders made himself a very powerful enemy, the star US cancer researcher of Italian origin, Carlo Croce:

“But that didn’t stop Sanders from alleging that Dr. Carlo Croce, a prominent cancer researcher at Ohio State University, falsified data or plagiarized text in more than two dozen articles Croce has authored. For the past two-plus years, Sanders has contacted scientific journals in which the articles appeared to alert them of his concerns. Earlier this month, he went more public with his claims in an investigative piece by the New York Times that delved into years of ethics charges against Croce.

“There are, and I anticipate there will be additional, consequences for my career,” Sanders said Tuesday afternoon while sitting in his office inside the Hockmeyer Hall of Structural Biology at Purdue.

This isn’t the first time Sanders has publicly accused a scientist of bad behavior. In 2012, Sanders had an article by a former colleague retracted on the basis that the colleague used their former deceased research partner’s data in the paper without permission”.

A long article had appeared in The New York Times prior to that, “Years of Ethics Charges, but Star Cancer Researcher Gets a Pass“, detailing the case of Carlo Croce, the role of Sanders as the whistleblower, and that of the Ohio State University, which was mostly covering up the affair. Croce hit back: he is now suing the newspaper and, in a separate lawsuit, also Sanders at a New York court, as reported by Retraction Watch.

Hence, Sanders knows first-hand what research misconduct is and how to act upon it. Indeed, the editorial was his idea, and his co-author Stebbing joined afterwards. As Sanders wrote to me:

“The impulse for the editorial and the list was from me. We discussed the inclusion of particular items and how they were described together”.

Stebbing indeed is not much of a whistleblower. Quite the opposite: he can in fact be seen as a victim of one. His own publication was heavily criticised on PubPeer for suspected western blot band duplications. And the piquant bit is: Stebbing, together with his first author Georgios Giamas (now Reader in Biochemistry at the University of Sussex, UK), offered explanations on PubPeer which sound very much like what he himself has been ridiculing in the Sanders & Stebbing editorial in his journal Oncogene.

[2.11.2017, 18:40: a section referring to a newspaper article dealing with clinical events was removed upon email request from Justin Stebbing. He also announced to me that he would address the PubPeer concerns and provide original gel images in the comment section below]

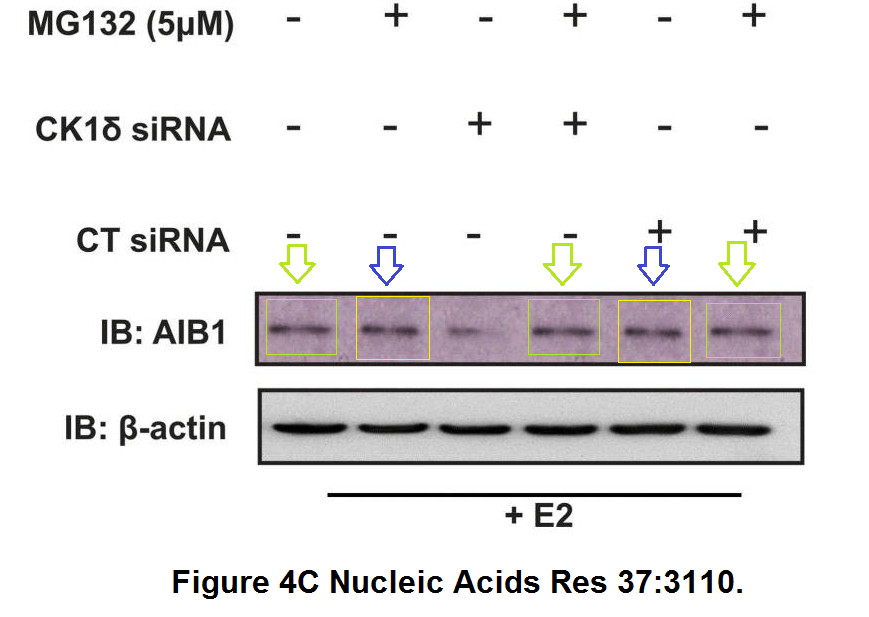

This was the above-mentioned paper:

Georgios Giamas, Leandro Castellano, Qin Feng, Uwe Knippschild, Jimmy Jacob, Ross S. Thomas, R. Charles Coombes, Carolyn L. Smith, Long R. Jiao, Justin Stebbing

CK1delta modulates the transcriptional activity of ERalpha via AIB1 in an estrogen-dependent manner and regulates ERalpha-AIB1 interactions

Nucleic Acids Research (2009) doi: 10.1093/nar/gkp136

The paper’s co-author Uwe Knippschild, professor at the surgery department of the University Clinic Ulm, Germany, who had some of his own papers flagged on PubPeer, commented:

“I also would like to thank you very much for your critical comments. I will contact the corresponding authors. Thereafter, I will reply as soon as possible“.

There was no follow-up from Knippschild’s side about this paper, but Giamas and Stebbing did engage in a debate with their critics.

Now follows the list of authors’ lame excuses from Sanders & Stebbing, Oncogene 2017 (copyright Springer Nature), alongside the justifications offered by Giamas and Stebbing on PubPeer regarding their own paper in Nucleic Acids Research (NAR). The comparison is illustrated with PubPeer evidence.

i. ‘Nothing to see here. Move along.’ Even though the evidence of image duplication or plagiarism is in many cases overwhelming, some authors refuse to admit that there was any problem with their article.

Giamas and Stebbing argued on PubPeer that there is indeed no reason to suspect duplications:

“Amongst the thousands gels / blots / immunofluorescence and other experiments that I (GG) have personally performed the last ~17 years (as I am sure it has happened for other scientists) there were occasions that certain results/data were indeed ‘strangely similar’ (i.e. protein bands running in an identical way, 2 different cells looking alike under the microscope, etc)”.

ii. ‘My dog ate the data.’ Certainly having the original data would help resolve the issues and clearly this excuse has greater validity as more time passes. But sometimes the image manipulation/plagiarism is so evident, that the lack of the original data cannot be an exonerating circumstance.

Imperial College mandates: “Primary data is the property of Imperial College and should remain in the laboratory where it was generated for as long as reference needs to be made to it and for no less than ten years“. Giamas and Stebbing, however, suggested on PubPeer that the 8-year-old data might be unavailable:

“Unfortunately, as you can imagine, due to the fact that these data are quite old, it will be very difficult (if not impossible) to trace the original blots and recall who exactly was involved in the execution of the specific experiments”.

iii. ‘If you look hard enough, you can find a trivial difference between two supposedly duplicated images.’ First, the standard should be how likely is it that two images could be so similar and yet have distinct origins. Artifacts that can introduce small differences can occur during image processing. Also, different exposures of the same data can produce apparent image differences; again the standard should be about the probability of similarity.

According to Stebbing, similar-shaped bands can indeed be produced by chance, when the same blotting apparatus is used (in fact, their German colleague Roland Lill suggested the same very recently, also on PubPeer). As Giamas and Stebbing explained on PubPeer:

“In this case, whether this was due to a technical issue (for example, the quality of the SDS-PRECAST-GEL used, or whether during the semi-dry blot transfer something went wrong, or something else…), as I mentioned before it is difficult to be 100% certain as it is impossible to recall how the exact blot(s) were executed ~8 years ago. Interestingly, we recently had an incident using a semi-dry blot device, where there was a problem with the upper stainless-steel plate (surface/electrodes) of the equipment. As a result, ALL our membranes were coming up with the same ‘background’ signal (noise) that affected the proper visualisation/analysis of the proteins run in different lanes (there was actually a sort of an identical spotted (marked) pattern in all of the protein bands making them look ‘strangely similar’ (whether this was due to something that was previously stuck in the steel surface, or a problem with the specific part affecting the current flow, heat generation etc…”.

iv. ‘It was only a control experiment.’ How many scientists have not had an unexpected result in a ‘control’ experiment that actually led to some insight? If control experiments were unimportant, why were they included in the article in the first place? Connected to this sophistry is: ‘The data duplication does not affect the results.’ The said error may not affect the main conclusions of the research but all data presented should be considered results. Moreover, identified errors, especially if they occur more than once in a single paper or in several papers by the same author(s) undermine the trust of the Editors in any results presented by the author(s). See the Lady Bracknell quotation.

v. ‘It was the fault of a junior researcher.’ This could very well be true. It is sad when the research of a laboratory group is undermined by one unscrupulous person. However it remains to be asked, how did such obvious image duplications escape the attention of the other co-authors? To qualify as an author of a paper one must have approved the final version. If research misconduct was not identified then this does not reflect well on the integrity of, and care and attention paid by the co-authors.

vi. ‘The responsible researcher is from another country and therefore unfamiliar with the standards expected in scientific publications.’ First, of course, this argument is highly insulting to the many researchers from other countries who do not engage in such activities. Second, if a laboratory director is concerned about the understanding of standards by researchers in one’s group from other countries, then one is responsible for inculcating the proper values into those researchers and displaying an extra level of scrutiny of their products. Again, see the Lady Bracknell quotation.

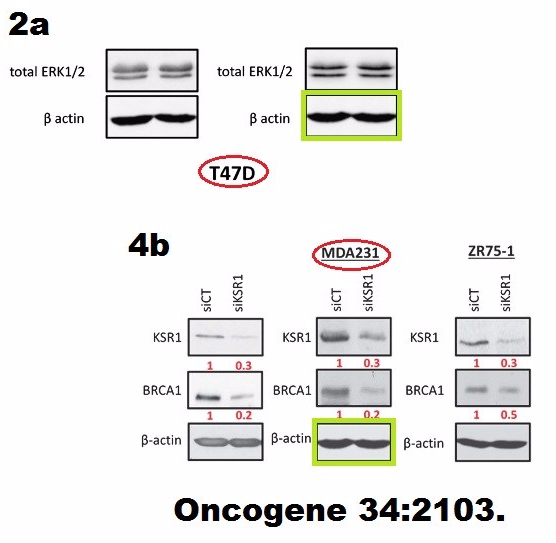

A reply Giamas left on PubPeer regarding his joint paper with Stebbing in Oncogene (Stebbing et al 2015) covered all three points iv-vi above. It assigned the blame for a failed loading control to a junior co-author from China:

“Regarding the ‘actin’ labelling in the KSR1 paper, our previous postdoc student working on this project (Dr Hua Zhang) has confirmed that this is a mislabelled blot. Indeed this actin blot is representative of the T47D cell line (and NOT the MDA231). However, the relative MDA231 blot looks relative similar, meaning that equal and therefore comparable protein amounts have been loaded and therefore the interpretations/conclusions are the same (as most actin blots should look like following proper bradford quantification). Apparently, during the preparation of the supl. figure, he accidentally used the wrong actin blot and we apologise for this“.

vii. ‘The results have been replicated by ourselves or others, so the image manipulation is irrelevant.’ Data are included in an article for a reason. Science is based upon a certain level of trust, but it is not all-encompassing. If the data do not represent the experiments described, then that trust has been violated, and no rationalization about final outcomes should affect judgment about the culpability of the authors.

Indeed, Giamas and Stebbing opened their defence on PubPeer by declaring that all results were reproduced, which probably means that any eventual data manipulations become utterly irrelevant:

“First of all, let me confirm that we have repeated these experiments (as we do for every single one) at least 3x times; therefore, the results/conclusions presented in this manuscript are valid and reproducible”.

viii. ‘Someone is out to get me.’ Perhaps true but irrelevant. By implying that if not for the fact that one was being targeted, the behavior would be considered acceptable, one traduces the entire scientific community. Such practices are neither common nor worthy of toleration.

Possibly in a similar vein, Giamas commented on PubPeer:

“I feel honoured (in a way) that you spent your time, going through all my publications to date. Thank you for pointing out things that potentially require further clarification/corrections.

I can assure you that we will carefully look into these ASAP and proceed with any corringedum / erratum requested by the respective journal.

More importantly, I want to re-emphasise the accuracy and scientific integrity of our published data/results, using the specific protocols, reagents, etc employed and referenced in each case”.

Giamas and Stebbing already had to correct a paper, Melaiu et al PLOS One 2014, which they authored in collaboration with another lab from Italy, for microscopy image duplication (below).

The Sanders & Stebbing editorial contains this bit; one wonders whether Stebbing put it in with his own papers in mind:

“Some accusations are clearly false, but it is the responsibility of the journal to investigate all allegations made. A few of the excuses listed above may occasionally be valid in some context”.

Certainly he will ruthlessly investigate the issues in Stebbing et al 2015, being the Editor-in-Chief of the journal Oncogene where it was published. And as for his paper in Nucleic Acids Research: the journal showed little evidence of any interest in doing anything at all about a similarly problematic case from France. “Nothing to see here. Move along.”

Update 3.11.2017.

I exchanged several emails with Justin Stebbing, in which he indicated that he was inclined to share the original gel scans. In one email, he forwarded to me this communication he had received from an editor of Nucleic Acids Research:

“I have finally taken the time to review your response. Thank you for taking the time to produce such a detailed report and for repeating the experiments. We are satisfied with your response and the evidence provided and agree that the figures have not been unethically altered. We now consider this case closed”.

Update 24.11.2020

Obviously nobody cares about data manipulation: Giamas is now even an Imperial Medicine visiting professor, so this says it all. But Stebbing is in growing trouble over his activities as a doctor to wealthy celebrities. Back when this article was first published, he wrote to me asking to remove a mention of this 2017 Telegraph article about him being investigated by the General Medical Council (GMC).

Stebbing asked me not to reference that news piece so that my readers would not learn that he “has had practising privileges permanently withdrawn by some Harley Street clinics“, that he attempted “to give chemotherapy to a patient close to end of life, against the advice of colleagues“, and that he “treated patients at the London Claremont Clinic with an immunotherapy drug called pembrolizumab” while charging extra payment, prescribing the drug “indiscriminately” and with an “absence of trial data and clinical assessments”. This is why

“HCA, the country’s largest private care provider, has informed Prof Stebbing’s patients that from September they will no longer be allowed to receive treatment from a man who has published more than 550 peer-reviewed papers and been nicknamed “God” by some former patients.“

The GMC also placed Stebbing “under ongoing investigation over his fitness to practise and ruled he must be supervised in all of his clinical posts until November” 2018. This becomes relevant again because the BMJ reported in September 2020, in an update on the GMC investigation, that Stebbing

“faces allegations at a medical practitioners tribunal of failing to provide good clinical care to 11 patients between March 2014 and March 2017. […] The allegations, involving privately paying, terminally ill patients, include lack of informed consent, overestimation of the prognosis of treatment, poor judgment on the dosages and timings of treatments, acting outside national guidelines, and failing to take on board the concerns of colleagues.”

Many experimental techniques involving images can unfortunately be easily falsified. Images can also be falsified while a WB is being developed or a microscope image is being captured. In this case it is even more difficult to detect the fraud. Perhaps the solution lies in automation with recorded pre-defined settings.

or that editors bother to look at what is presented.

Wait, there might be more:

http://www.telegraph.co.uk/news/2017/06/16/high-profile-cancer-surgeon-nicknamed-god-faces-care-inquiry/

a paper from a decade ago in a mediocre journal – what depths we go to here!

NAR a mediocre journal? 8 years ago, not 10.

Could Justin Stebbing take a look at the data in this paper?

Oncogene. 2006 May 11;25(20):2860-72.

Fhit modulation of the Akt-survivin pathway in lung cancer cells: Fhit-tyrosine 114 (Y114) is essential.

Semba S, Trapasso F, Fabbri M, McCorkell KA, Volinia S, Druck T, Iliopoulos D, Pekarsky Y, Ishii H, Garrison PN, Barnes LD, Croce CM, Huebner K.

Comprehensive Cancer Center and Department of Molecular Virology, Immunology, and Medical Genetics, The Ohio State University, Columbus, 43210, USA.

See:-

https://pubpeer.com/publications/DCE5512C156A3C82EF17B5DB7F88A9#4

and

https://pubpeer.com/publications/DCE5512C156A3C82EF17B5DB7F88A9#8

8th March 2021 Expression of Concern FOR one of 2 Eics Oncogene, Justin Stebbing.

Journal referred matter to home institution, Imperial College, London.

Nucleic Acids Res. 2009 May;37(9):3110-23. doi: 10.1093/nar/gkp136. Epub 2009 Apr 1.

2021 expression of concern.

https://academic.oup.com/nar/advance-article/doi/10.1093/nar/gkab172/6163096

Editorial Expression of Concern on article ‘CK1δ modulates the transcriptional activity of ERα via AIB1 in an estrogen-dependent manner and regulates ERα–AIB1 interactions’

Published: 08 March 2021

On 01 April 2009, NAR published the article ‘CK1δ modulates the transcriptional activity of ERα via AIB1 in an estrogen-dependent manner and regulates ERα–AIB1 interactions’ by Georgios Giamas, Leandro Castellano, Qin Feng, Uwe Knippschild, Jimmy Jacob, Ross S. Thomas, R. Charles Coombes, Carolyn L. Smith, Long R. Jiao, and Justin Stebbing (1).

Following comments published on PubPeer, the Editors were first alerted by the Authors in 2017, and more recently by a Reader, that the blots depicted in several figures show unusual levels of similarity.

Details have been published in PubPeer: https://pubpeer.com/publications/1CCAC58543784D1B17C8416A6D97C2

The Authors have not been able to provide the original data. Therefore, following COPE guidelines, we are referring this to the Authors’ institution. The Editors advise Readers to examine the details of this study with particular care.

Keith R. Fox, Barry L. Stoddard

Senior Executive Editors

REFERENCES

1. Giamas G., Castellano L., Feng Q., Knippschild U., Jacob J., Thomas R.S., Coombes R.C., Smith C.L., Jiao L.R., Stebbing J. CK1delta modulates the transcriptional activity of ERalpha via AIB1 in an estrogen-dependent manner and regulates ERalpha-AIB1 interactions. Nucleic. Acids. Res. 2009; 37:3110–3123.

https://www.medscape.com/viewarticle/947323?src=

Leading Oncologist Admits Giving Chemo to Patient Too Sick to Receive It

Ian Leonard

March 12, 2021

MANCHESTER—Leading oncologist Professor Justin Stebbing has admitted giving chemotherapy treatment to a patient who was too sick to receive it and “approaching the end of the road”, a medical tribunal heard.

https://retractionwatch.com/2021/03/25/editor-who-opined-on-author-excuses-has-paper-subjected-to-an-expression-of-concern/#more-121790
